We continue with the challenge of capturing, and automating, company expertise. One problem came up consistently in our work with customers: feedback from document review rarely makes it back into the template. This time, we look at how Everest closes that loop.

For most teams, the document production process looks something like this: start from a static Word template, generate a draft, send it out for review. Stakeholders — regulatory affairs specialists, clinical leads, subject matter experts — leave comments, suggest edits, and flag gaps. Authors work through each one, incorporating changes into the document. Then it ships.

The master template stays untouched. Updating it is a separate manual effort — and one that rarely happens. The next document starts from the same place. The learning gets lost.

Turning Review Feedback into Template Improvements

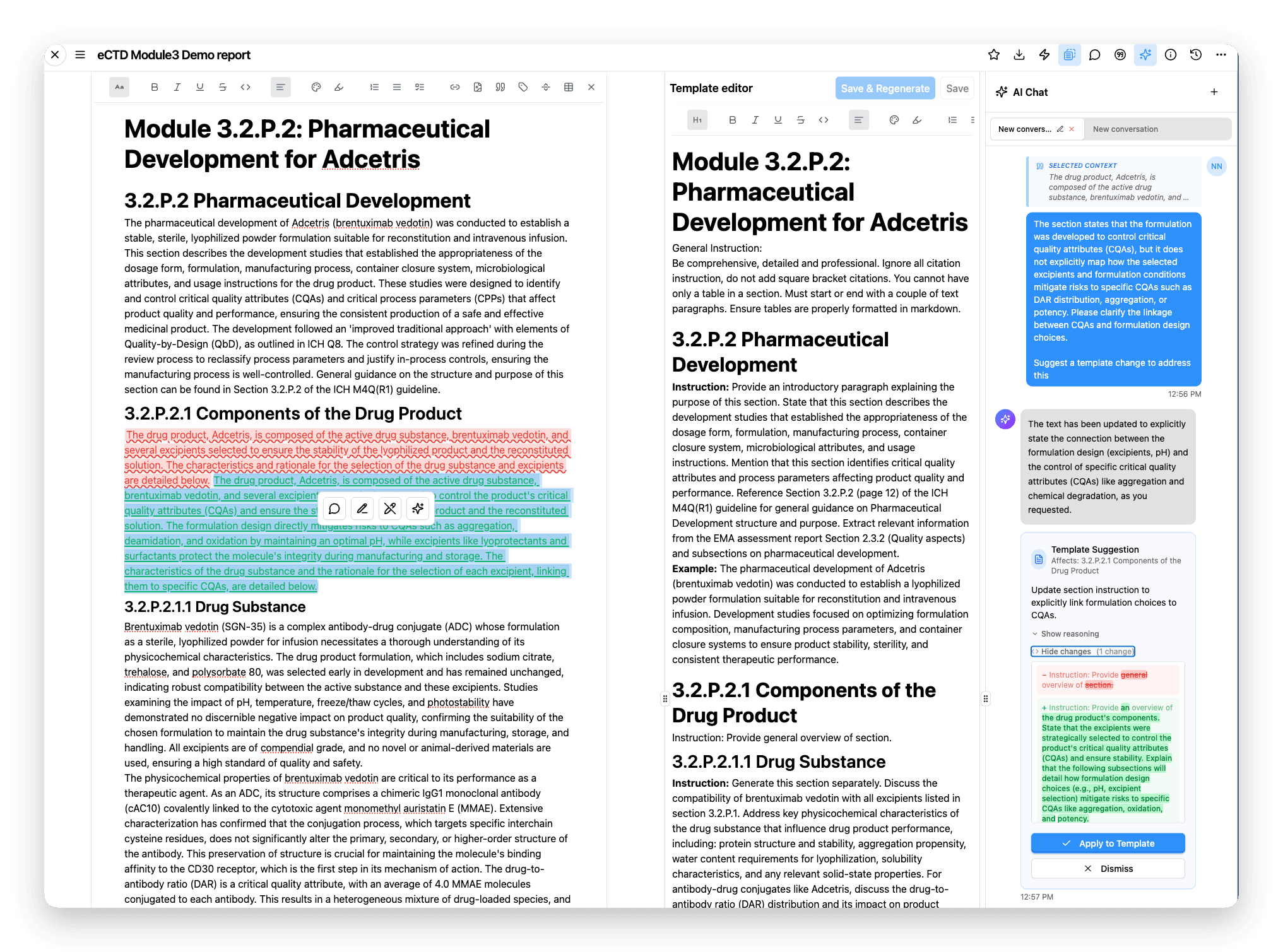

With Everest, this workflow changes fundamentally. Documents are generated from Everest templates, or from Blueprints (a series of interconnected templates), and reviewed within the platform, so all feedback and edits live in one place. As comments are resolved and changes are made, Everest surfaces those edits as suggestions for improving the underlying template.

Every suggestion appears as a tracked change in the chat sidebar, showing exactly what would change: a safety section updated to reflect revised regulatory guidance, a manufacturing step reordered, a heading adjusted. Authors apply or dismiss each suggestion individually; nothing is updated without explicit approval. The reasoning behind each change stays visible, so teams build a record of how the template evolved and why.

Document review feedback becomes a template suggestion — showing exactly what would change, with the option to apply or dismiss before anything is updated.

Once a suggestion is accepted, the change is written into the template. The next time the workflow runs, the improvement is already baked in: each iteration starts from a better template and produces a better document, and the team's expertise compounds over time.

Next in the Series

AI models can hallucinate numbers — producing results that look correct but aren't grounded in reality. In "Getting the Math Right," we look at how to ensure the numbers in your reports are computed from source data, not generated.